Milena Traikovich is a seasoned expert in demand generation and performance optimization, currently focusing on the intersection of marketing technology and organizational risk. With extensive experience in analytics and lead generation, she helps businesses navigate the complexities of digital transformation while ensuring that growth does not come at the expense of security. In an era where “Shadow AI” is becoming the norm, Milena provides a strategic roadmap for leaders to bridge the governance gap and protect their brand’s integrity.

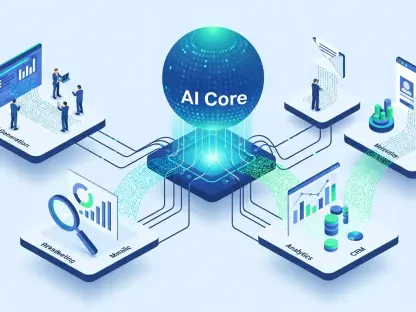

This conversation explores the reality of unregulated AI adoption in the workplace and the urgent need for structured oversight. Milena outlines practical steps for surveying current usage, implementing robust data guardrails, and establishing quality assurance protocols that scale. She also highlights the importance of maintaining a living governance policy that adapts as quickly as the technology itself.

Employees often adopt tools like ChatGPT or Claude independently before official policies exist. How do you effectively survey a team’s current usage patterns, and what specific metrics help determine if people are “figuring it out” on their own versus using tools safely?

The first rule of thumb is to never ask “if” your team is using AI, but rather to assume they already are. To get an honest picture, I recommend conducting a comprehensive survey that identifies which Large Language Models (LLMs), such as Gemini or Claude, people use daily and whether they’ve branched out into specialized agents. You need to gauge their comfort level: are they embracing these tools with enthusiasm or resisting them out of uncertainty? A critical metric to watch is the ratio of employees who feel they have adequate guidance to those who admit they are simply figuring it out as they go. If a large percentage of your workforce is self-taught, you likely have a visibility gap that could lead to unvetted tools entering your ecosystem without proper security checks.
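For teams that want to put a number on that guidance ratio, a minimal Python sketch follows. It is an illustration only, not a method described in the conversation: the `SurveyResponse` fields and the `guidance_summary` function are hypothetical names, and the survey is assumed to ask each person whether they use AI tools and whether they feel they have adequate guidance.

```python
from dataclasses import dataclass


@dataclass
class SurveyResponse:
    # Hypothetical survey fields; adapt them to your own questionnaire.
    uses_ai: bool
    has_adequate_guidance: bool
    tools: tuple = ()


def guidance_summary(responses):
    """Summarize how many AI users feel guided versus self-taught."""
    users = [r for r in responses if r.uses_ai]
    if not users:
        # No AI users reported, so the shares are undefined.
        return {"users": 0, "guided_share": None, "self_taught_share": None}
    guided = sum(1 for r in users if r.has_adequate_guidance)
    return {
        "users": len(users),
        "guided_share": guided / len(users),
        "self_taught_share": (len(users) - guided) / len(users),
    }


if __name__ == "__main__":
    sample = [
        SurveyResponse(True, True, ("Claude",)),
        SurveyResponse(True, False, ("Gemini",)),
        SurveyResponse(True, False, ("ChatGPT", "Claude")),
        SurveyResponse(True, False),
        SurveyResponse(False, False),
    ]
    print(guidance_summary(sample))
```

A high `self_taught_share` is the signal the answer above points to: many people are using tools without guidance, which suggests a visibility gap worth investigating.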

Unregulated AI usage can lead to proprietary data being used for model training or legal exposure from third-party terms. What specific guardrails should be implemented for handling PII and client data, and how do you enforce these across different departments?

The risks are high because many users unknowingly agree to third-party terms that grant AI platforms rights over every single prompt they enter. To combat this, you must implement explicit guardrails that strictly prohibit the input of Personally Identifiable Information (PII), internal documents, and sensitive financial data into any non-enterprise tool. I suggest creating a visual, one-page infographic that clearly outlines these rules, as a 50-page policy document is rarely read and even more rarely followed. Enforcement involves mandating the anonymization of data before any analysis occurs and ensuring that teams understand the “why” behind compliance regulations like GDPR. By documenting which categories of information are off-limits, you transform vague concerns into actionable habits for every department.
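As a rough illustration of anonymizing text before it reaches a non-enterprise tool, here is a minimal Python sketch. It is an assumption-laden example rather than a recommended implementation: the `redact` function and the two patterns are hypothetical, they only catch email addresses and phone-like digit runs, and they will miss names, addresses, account numbers, and most other PII. A real deployment would need a vetted detection approach and human review.

```python
import re

# Illustrative patterns only; they are deliberately simple and incomplete.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text):
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    text = PHONE.sub("[PHONE]", text)
    return text


if __name__ == "__main__":
    prompt = "Contact jane.doe@example.com or +1 (555) 010-9999 today"
    print(redact(prompt))
```

Running a step like this before analysis turns the "no PII in prompts" rule into a habit that does not depend on each person remembering it, though it does not replace the rule itself.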

Not all generative AI tools carry the same level of risk regarding data retention and security. How should a leadership team categorize approved versus prohibited platforms, and what criteria determine whether a tool is cleared for day-to-day use or strictly limited cases?

Categorization must be based on the level of privacy protection guaranteed by the vendor, specifically whether they use your inputs for model training. Leadership should create a tiered list: platforms cleared for general day-to-day use, tools restricted to specific use cases, and those that are strictly prohibited under any circumstances. You also need to look at the subscription level, as a free tier often has much looser data retention policies than a paid enterprise plan. This vetting process should be owned by a dedicated cross-functional team that evaluates whether a tool meets your organization’s legal and security standards. Without this clear hierarchy, your employees are left to make their own judgment calls, which can lead to conversation histories being subpoenaed or accessed during a breach.
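The three-tier list can be sketched as a small data structure. The Python below is a hypothetical example: the `ToolReview` fields mirror the criteria mentioned above (training on inputs, subscription level, legal and security review), but the specific rules in `classify` are an assumption made for illustration, and the tool names are invented. Each organization's cross-functional team would set its own thresholds.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    GENERAL_USE = "cleared for day-to-day use"
    RESTRICTED = "limited to specific use cases"
    PROHIBITED = "prohibited"


@dataclass(frozen=True)
class ToolReview:
    # Hypothetical review record filled in by the vetting team.
    name: str
    trains_on_inputs: bool
    enterprise_plan: bool
    passed_legal_security_review: bool


def classify(review):
    """Map a review to a tier using illustrative, assumed rules."""
    if review.trains_on_inputs or not review.passed_legal_security_review:
        return Tier.PROHIBITED
    if not review.enterprise_plan:
        # Free or consumer tiers often have looser data retention terms.
        return Tier.RESTRICTED
    return Tier.GENERAL_USE


if __name__ == "__main__":
    reviews = [
        ToolReview("ExampleChat Enterprise", False, True, True),
        ToolReview("ExampleChat Free", False, False, True),
        ToolReview("UnvettedSummarizer", True, False, False),
    ]
    for review in reviews:
        print(f"{review.name}: {classify(review).value}")
```

Keeping the list in a structured form like this makes it easier to publish a single source of truth and to re-run the classification when a vendor changes its terms.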

When production volume increases through AI, brand quality can easily deteriorate without human oversight. What does a robust QA process look like for AI-generated content, and how do you define “good enough” while maintaining clear ownership of quality issues?

A robust QA process is essential because the sheer speed of AI can easily outpace your ability to maintain brand standards. You need to define exactly what “good enough” looks like for different types of output; for instance, high-stakes whitepapers require heavy editorial oversight, while internal memos might only need a lighter touch. It is vital to establish who has the final sign-off authority and to document brand voice, tone, and messaging guidelines that must be applied to every generated piece. By clearly assigning ownership of quality issues, you prevent the erosion of trust that happens when customers or stakeholders encounter generic or inaccurate content. AI should be treated as a draft-generator, but the human editor must remain the guardian of the final quality.

AI capabilities evolve so rapidly that static governance policies often become obsolete within months. What rhythm do you recommend for auditing approved tools, and how can organizations build a feedback loop where employees safely report new use cases or tools?

Governance cannot be a “one and done” exercise; it must be a living strategy that adapts to new tool capabilities. I recommend a regular review cycle, ideally quarterly or semi-annually, to audit your list of approved tools and update your guardrails as the technology changes. To make this work, you should create an open feedback loop where employees feel safe asking questions or sharing the new tools they’ve discovered. This two-way communication allows you to reinforce positive usage and catch risky behaviors early. By staying flexible and scheduling regular touchpoints, you ensure your organization isn’t relying on outdated rules for a technology that changes every few weeks.

What is your forecast for AI governance?

My forecast for AI governance is that it will shift from being a reactive “compliance hurdle” to becoming a core competitive advantage for modern organizations. We are moving toward a future where businesses will be judged as much on their “responsible AI” credentials as they are on their product quality. I anticipate that we will see the rise of more automated governance tools that can monitor prompts in real time, but the human element will remain the most critical factor. Organizations that successfully bridge the gap between employee experimentation and corporate safety will be the ones that scale efficiently without facing the legal or reputational fallout that comes from unregulated usage. Ultimately, governance will be less about saying “no” and more about providing the safe tracks that allow AI innovation to run at full speed.