As artificial intelligence shifts from a tool for efficiency to a tool for creation, the boundaries of personal identity are being redrawn. Milena Traikovich, a seasoned expert in demand generation and performance optimization, joins us to discuss the troubling trend of “digital twins” in the fashion industry. With her deep background in analytics and high-quality lead nurturing, she provides a unique perspective on how brands are navigating the murky waters of synthetic media and the ethical fallout of bypassing human talent.

Brands are now using AI to replicate specific physical markers like unique nose shapes, freckles, and eye colors while updating details like hairstyles. How do these technical capabilities complicate the legal definition of “likeness,” and what specific metrics determine if a digital recreation crosses into identity theft?

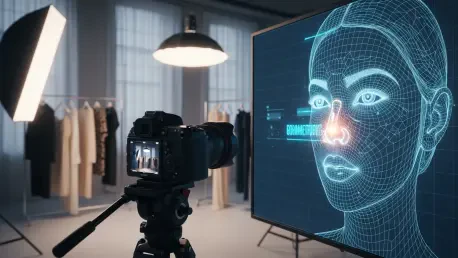

The technical ability to isolate specific biometric markers, such as the distinct bump on a nose or exact eyebrow shapes, creates a profound legal gray area. When a brand takes photos from 2023 and uses AI to update a model’s hair from a mullet to a more contemporary style, it is essentially creating a version of that person that exists in the “now” without their presence. This goes beyond simple inspiration because the metrics of similarity, such as matching freckle patterns and eye colors, are mathematically tied to a specific individual’s biology. We are seeing a shift where “likeness” is no longer just a flat image but a collection of data points that can be reconfigured, making it harder for current laws to define where a person’s digital rights end and a brand’s “creative” output begins.

When companies choose AI generation over hiring human models to save on labor costs, what are the long-term economic consequences for the creative industry? Could you walk us through the step-by-step process of how a brand might justify the ethical trade-offs of this practice?

The long-term economic consequence is a hollowing out of the creative middle class, where models, photographers, and stylists are replaced by a single prompt engineer. This “labor shortcut” allows a company to bypass the costs of a physical shoot, which include daily rates, usage fees, and studio rentals, effectively stealing the value of a professional’s aesthetic without compensation. To justify this, a brand typically prioritizes scale and speed, convincing itself that since the final AI face is “slightly changed,” it constitutes an original work. It rationalizes the ethical trade-off by viewing human features as raw data rather than personal property, focusing solely on the bottom line of its performance metrics.

Given that models often discover these digital twins through social media ads without prior consent, what legal hurdles currently prevent individuals from seeking immediate compensation? What specific regulatory boundaries are needed to protect personal features from being scraped and utilized for commercial profit?

One of the biggest hurdles is the lack of clear, modern regulations specifically targeting synthetic media; many models find themselves in a “wild west” scenario where they discover their AI twins on TikTok or X by pure chance. Currently, brands can argue that because the image is “newly generated” by a machine, it doesn’t fall under traditional copyright law, which protects specific photographs, or under right-of-publicity laws, which were written with a real person’s actual image in mind. We desperately need regulatory boundaries that treat biometric data, like that unique nose bump or eye color, as protected intellectual property that cannot be scraped from the web for commercial use. Without these protections, models are essentially forced to watch their own faces sell products for companies that refuse to put them on the payroll.

Consumers are increasingly expressing a distinct “ick” factor toward AI ads that they feel lack a human story or soul. How does this shift in sentiment impact a brand’s long-term reputation, and can you share an anecdote regarding how audience backlash influences corporate marketing strategies?

This “ick” factor is a significant threat to brand equity because it signals a breach of trust between the company and its audience. When a friend sends a model an ad in which she recognizes her own face, but the image feels “soulless,” it triggers a visceral negative reaction that can go viral, as we saw with the Feb. 26, 2026, TikTok report. One commenter noted that while the ad might look fine technically, the lack of a human story makes them “hate it,” which is a disaster for any brand trying to build genuine connection. This type of backlash often forces companies to pivot back to human-led campaigns as they realize that the short-term savings of AI are not worth the long-term loss of consumer loyalty and the “insulting” reputation of being a labor thief.

What is your forecast for the future of digital likeness rights in the fashion industry?

My forecast is that we will see a “certified human” movement backed by mandatory disclosure, where brands are required by law to state when an image is AI-generated and whether it was trained on specific human likenesses. The industry will likely move toward a licensing model where models sell “digital twins” of themselves for a recurring fee, rather than having their images stolen and updated without consent. Eventually, the outcry for regulation from both creators and “violated” consumers will lead to strict policies that treat a person’s face with the same legal weight as a trademarked logo. This will ensure that the fashion world remains a place of human storytelling rather than just a collection of stolen, synthetic pixels.