The traditional barrier between creative imagination and technical execution has largely collapsed, replaced by a network of intelligent systems that prioritize strategic intent over manual technique. This review examines how video production has changed as a result and where it departs from traditional media creation. In this environment, much of the technical “how” is automated, shifting the professional burden toward high-level creative decision-making and systemic orchestration. With the cost of entry sharply reduced, the focus has pivoted toward navigating a fragmented ecosystem of competing generative models and unified technological platforms.

The Paradigm Shift in Modern Video Creation

Until recently, video editing was a specialized craft requiring years of experience with complex timelines, keyframes, and color grading. Those manual skills are now secondary to the ability to direct an intelligent system. High-end generative production tools have democratized the field, allowing anyone with a clear vision to produce polished, cinematic-looking content without the overhead of a traditional studio. The role shifts from labor-intensive software mastery to something closer to that of a creative director or strategist.

This shift is significant for the broader technological landscape. By removing the friction of technical learning curves, these systems have enabled a large influx of creative output. This democratization brings its own challenges, chiefly the need to distinguish quality in a market saturated with AI-generated content. Success is no longer measured by the ability to use a tool, but by the ability to manage the diverse technological inputs that constitute a modern production pipeline.

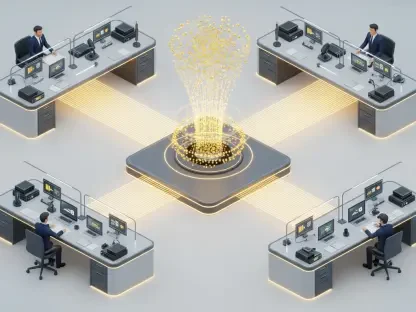

Architectural Components of AI-Driven Systems

Automated Generative Modeling

At the core of these systems lies generative modeling, which automates the most tedious aspects of production. Tasks such as keyframing, rotoscoping, and color grading can now be delegated to models trained to approximate the behavior of light and motion rather than performed by hand. When a creator provides a simple prompt, the system does not just “draw” a scene; it behaves as though it were modeling a three-dimensional environment, with consistency maintained across frames through temporal analysis. This automation allows high-quality content to be produced in minutes, where comparable work previously took weeks of painstaking labor.

What distinguishes this approach is latent space manipulation, in which the model interprets abstract concepts and translates them into visual data. For example, the system can apply the “mood” of a specific cinematic era to a raw clip without requiring manual filter adjustments. The result depends heavily on the quality of the training data and the precision of the prompt. While the system excels at technical execution, it still requires a human hand to define the aesthetic boundaries and ensure the output aligns with the specific narrative goals of the project.

Curatorial Decision-Making Frameworks

As the “how” of production becomes invisible, the “what” and “why” have taken center stage. Creators now operate within a curatorial framework, where their primary task is to filter, refine, and steer the outputs of various generative models. This involves a high-level oversight of the production process, using personal taste and strategic vision to select the best results from a sea of AI-generated possibilities. The modern creator functions less like a technician and more like a curator of digital possibilities, blending different outputs to create a cohesive whole.

This framework is unique because it emphasizes the subjective nature of creativity over objective technical skill. A professional’s value is now found in their ability to manage a diverse array of technological inputs, ensuring that the final product feels intentional rather than accidental. This requires a deep understanding of how different AI systems interact, as well as the ability to recognize when a model is failing to capture the desired nuance. The move toward curation reflects a maturation of the medium, where the human element provides the necessary guardrails for the vast capabilities of the machine.
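The filter-and-select loop described in this section can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Candidate` type, the `curate` function, the model names, and the numeric ratings are all hypothetical, and the fixed ratings stand in for a human judgement that no score fully captures.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Candidate:
    """One generated output and the (hypothetical) model that produced it."""
    model: str
    clip_id: str


def curate(
    candidates: List[Candidate],
    score: Callable[[Candidate], float],
    keep: int = 2,
    threshold: float = 0.5,
) -> List[Candidate]:
    """Drop candidates scoring below `threshold`, then return the top `keep`."""
    passing = [c for c in candidates if score(c) >= threshold]
    return sorted(passing, key=score, reverse=True)[:keep]


# Stand-in for human judgement: fixed ratings a reviewer might assign.
ratings = {"a1": 0.9, "a2": 0.3, "b1": 0.7, "b2": 0.6}
pool = [
    Candidate("model_a", "a1"),
    Candidate("model_a", "a2"),
    Candidate("model_b", "b1"),
    Candidate("model_b", "b2"),
]

shortlist = curate(pool, score=lambda c: ratings[c.clip_id])
print([c.clip_id for c in shortlist])  # ['a1', 'b1']
```

The point of the sketch is where the effort sits: generating the pool is cheap, while the scoring function, here a lookup table, is the part that carries the creator's taste.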

The Transition from Specialized Tools to Unified Platforms

The market has moved away from fragmented, individual software applications toward integrated “AI video systems” that serve as central hubs for multiple models. In the past, a creator might have used one tool for image generation, another for motion, and a third for sound. Today, unified platforms allow for a seamless transition between these tasks, acting as an operating system for the creative process. This integration is vital because it reduces the friction inherent in switching between different software environments, allowing for a more fluid and uninterrupted workflow.

Furthermore, industry behavior now prioritizes outcome-oriented workflows. Professionals are less loyal to specific software brands and more focused on the flexibility to swap underlying models as technology improves. If a new model emerges that offers better lighting or more realistic physics, a unified platform allows the creator to plug it in without relearning the entire interface. This model-agnostic approach ensures that the workflow remains future-proof, protecting the creator’s investment in the platform while allowing them to benefit from the latest breakthroughs in machine learning.
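One way to picture the model-agnostic approach is a platform that talks to every backend through a single small interface. The sketch below is hypothetical throughout: `VideoModel`, `Platform`, and the two backend classes are invented for illustration and do not describe any real product's API. The backends return strings rather than video so the example stays self-contained.

```python
from typing import Dict, Protocol


class VideoModel(Protocol):
    """Hypothetical interface every pluggable backend is assumed to satisfy."""

    def generate(self, prompt: str, seconds: int) -> str: ...


class FastDraftModel:
    def generate(self, prompt: str, seconds: int) -> str:
        return f"draft[{seconds}s]: {prompt}"


class HighFidelityModel:
    def generate(self, prompt: str, seconds: int) -> str:
        return f"hifi[{seconds}s]: {prompt}"


class Platform:
    """Keeps the workflow fixed while the underlying model can be swapped."""

    def __init__(self) -> None:
        self._models: Dict[str, VideoModel] = {}
        self._active: str = ""

    def register(self, name: str, model: VideoModel) -> None:
        self._models[name] = model
        if not self._active:  # the first registered model becomes the default
            self._active = name

    def use(self, name: str) -> None:
        if name not in self._models:
            raise KeyError(f"unknown model: {name}")
        self._active = name

    def render(self, prompt: str, seconds: int = 5) -> str:
        if not self._active:
            raise RuntimeError("no model registered")
        return self._models[self._active].generate(prompt, seconds)


platform = Platform()
platform.register("draft", FastDraftModel())
platform.register("hifi", HighFidelityModel())

first = platform.render("sunrise over a harbor")
platform.use("hifi")  # swap the backend; the calling code does not change
second = platform.render("sunrise over a harbor")
print(first)   # draft[5s]: sunrise over a harbor
print(second)  # hifi[5s]: sunrise over a harbor
```

The creator-facing call, `render`, is identical before and after the swap, which is the property the paragraph above describes as future-proofing.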

Practical Implementations and Fluid Workflows

Traditional production followed a rigid linear path consisting of pre-production, filming, and post-production. Today, that process has been replaced by an iterative, discovery-based workflow where these phases overlap. Creators often “discover” their story during the production process by generating variations of a scene in real-time. This fluid approach allows for a level of experimentation that was previously impossible due to cost and time constraints. A project can evolve from a simple idea to a finished cinematic sequence in a single afternoon, with each iteration informing the next.

A unique use case in this new era is media convergence, where the lines between static imagery and dynamic video are blurred. A common workflow involves generating a highly detailed static image to establish the aesthetic tone and then using AI layers to evolve that image into a moving scene. This method provides the creator with extreme control over the visual details while leveraging the power of generative motion. By grounding the video in a high-fidelity static base, the system avoids many of the consistency issues that plague pure video generation, resulting in a more polished and professional final product.

Addressing Market Fragmentation and Technical Hurdles

Despite the rapid progress, the industry still faces the “Fragmentation Problem.” No single tool currently excels in every category; some models are optimized for speed and social media turnover, while others focus on hyper-realism or consistent character motion. This creates a landscape of “option overload,” where professionals must decide which trade-offs they are willing to accept for a specific project. Switching between these specialized tools causes technical friction, as data formats and prompt structures often vary between platforms, slowing down the overall creative process.

Ongoing development efforts focus on mitigating this friction through more standardized interfaces and better interoperability between models. A second, harder challenge is maintaining motion consistency across long sequences: AI systems can still struggle with temporal coherence, producing visual “hallucinations” or artifacts. These hurdles are significant, but they are being addressed quickly. The current market is a battlefield of competing standards, and the winners will be the systems that offer the most consistent results with the least manual intervention.
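To make the temporal coherence problem concrete, the sketch below flags abrupt frame-to-frame changes in a tiny synthetic clip. It is a deliberately crude proxy and an assumption of this example, not a description of how any production system measures coherence: the `frame_delta` and `flag_jumps` helpers and the 0.25 threshold are hypothetical, and a legitimate cut or fast motion would trigger the same flag.

```python
from typing import List, Sequence

Frame = Sequence[Sequence[float]]  # grayscale pixel rows, values in [0, 1]


def frame_delta(a: Frame, b: Frame) -> float:
    """Mean absolute per-pixel difference between two equally sized frames."""
    total = 0.0
    count = 0
    for row_a, row_b in zip(a, b):
        for pa, pb in zip(row_a, row_b):
            total += abs(pa - pb)
            count += 1
    return total / count if count else 0.0


def flag_jumps(frames: Sequence[Frame], threshold: float = 0.25) -> List[int]:
    """Return indices i where frame i differs sharply from frame i - 1."""
    return [
        i
        for i in range(1, len(frames))
        if frame_delta(frames[i - 1], frames[i]) > threshold
    ]


clip = [
    [[0.0, 0.0], [0.0, 0.0]],
    [[0.0, 0.0], [0.0, 0.0]],
    [[1.0, 1.0], [0.0, 0.0]],  # abrupt change: half the pixels flip
    [[1.0, 1.0], [0.0, 0.0]],
]
print(flag_jumps(clip))  # [2]
```

A check like this can only point a reviewer at suspicious frames; deciding whether a jump is an artifact or an intended cut remains a human call.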

Future Outlook: Predictive Mastery and Next-Generation Models

The industry is anticipating the next leap in motion consistency and scene coherence, represented by next-generation models such as Veo4. These upcoming systems promise to address the remaining issues with temporal stability, allowing for longer, more complex sequences that maintain a single aesthetic throughout. For the professional creator, the future lies in “predictive mastery”: the ability to anticipate these technological shifts and adapt workflows accordingly. Staying competitive in this field depends on tracking the most advanced systems and adopting them early.

Long-term survival in the creative sector will require a shift toward systemic adaptability. As models become more capable, the distinction between “real” and “generated” footage will continue to fade, leading to a new era of synthetic media. Professionals who can master the orchestration of these next-generation models will find themselves with unprecedented creative power. The focus will remain on future-proofing, where the ability to integrate new breakthroughs into an existing pipeline becomes the standard for professional success, ensuring that the human vision remains the driving force behind the technology.

Summary of the Technological Evolution

The evolution of AI video systems represents a fundamental shift from rigid tool mastery to systemic adaptability. This transition prioritizes human creative vision by automating the technical complexities that once limited artistic expression. The value of professional work has moved from the execution of the frame to the orchestration of the entire system. The move toward unified platforms has reduced, though not eliminated, the friction of the “Fragmentation Problem,” and temporal consistency remains a focus of ongoing development.

Ultimately, these systems allow creators to focus on narrative and aesthetic goals rather than the technical limitations of traditional software. The industry is moving toward a model-agnostic future in which the ability to pivot and experiment is the most valuable asset. While the technology continues to advance toward better motion coherence, the central role of the human curator has been solidified. Production returns its focus to the core of the creative process, and the technical barriers of the past become largely obsolete.