Milena Traikovich is a powerhouse in Demand Generation, specializing in the delicate intersection of analytics and performance optimization. With a career dedicated to transforming raw data into high-quality lead engines, she has pioneered strategies that bridge the gap between marketing’s promises and sales’ reality. Her work focuses on building “compounding data layers,” systems that move beyond simple tool-stacking to create a unified, intelligent GTM motion. In this discussion, we explore the mechanics of automated qualification, the ripple effects of precise account scoring, and the shift from manual data entry to strategic, high-velocity revenue operations. We look into how precise firmographic data influences enterprise deal sizes, the automation of complex sales frameworks like MEDDPICC, and the vital role of cross-functional alignment in creating a data system that compounds in value over time.

When physical location counts and specific industry classifications are critical to deal value, how do you ensure these data points remain accurate? Could you walk through the step-by-step process of waterfalling enrichment providers and describe the specific impact precise data has on enterprise win rates?

The frustration of seeing a prospect look perfect on paper only to have the deal fall apart in practice because of a data mismatch is something every GTM leader has felt. To prevent this, we implement a robust enrichment layer that moves beyond the limitations of a single vendor. We use workflows that recategorize broad industry labels into highly specific niches using AI agents and then waterfall multiple enrichment providers to pin down elusive data like international location counts. This isn’t a one-time scrub; these enrichments run continuously to catch changes as companies restructure or expand into new markets. When you get this level of precision, the results are undeniable: top-tier enterprise accounts begin closing at a 22% higher rate. Beyond just closing more often, the deal size itself tends to be 50% larger because the sales team is targeting organizations where the product delivers the most inherent value.
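For readers who want to picture the waterfall step, here is a minimal sketch in Python. It assumes each enrichment provider can be wrapped as a function that takes a company domain and returns a location count, or `None` when it has no data. The provider names, the stub data, and the `waterfall_location_count` helper are hypothetical illustrations, not the vendors or code used in the workflow described above.

```python
from typing import Callable, Optional

# A provider takes a company domain and returns a location count, or None
# when it has no data. These are hypothetical stand-ins for real vendor APIs.
Provider = Callable[[str], Optional[int]]


def waterfall_location_count(
    domain: str, providers: list[tuple[str, Provider]]
) -> tuple[Optional[int], Optional[str]]:
    """Try providers in priority order and return the first usable value,
    together with the name of the provider that supplied it."""
    for name, lookup in providers:
        try:
            value = lookup(domain)
        except Exception:
            # One failing provider should not stop the waterfall.
            continue
        if value is not None and value > 0:
            return value, name
    return None, None


# Stub providers for illustration only (assumed data).
def provider_a(domain: str) -> Optional[int]:
    return None  # no coverage for this company


def provider_b(domain: str) -> Optional[int]:
    return {"example.com": 42}.get(domain)


PROVIDERS = [("provider_a", provider_a), ("provider_b", provider_b)]

count, source = waterfall_location_count("example.com", PROVIDERS)
print(count, source)  # 42 provider_b
```

Recording which provider supplied each value makes it easier to audit disagreements later, and re-running the same function on a schedule is one simple way to approximate the continuous refresh mentioned above.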

Manually extracting MEDDPICC insights from call transcripts often leads to inconsistent data and coaching delays. How can organizations automate this extraction to save hundreds of hours monthly, and what specific evidence should be pulled from conversations to help managers coach their teams more effectively?

In most organizations, competitive intelligence is essentially locked inside a black box of call transcripts, with managers only having the bandwidth to review maybe 10% of total conversations. We break that bottleneck by pulling every recorded call into a structured table where task-specific prompts parse the transcript specifically for MEDDPICC dimensions. The system doesn’t just check a box; it pulls the actual supporting evidence—the specific phrases or concerns raised by the prospect—and writes it back to the CRM. This transition alone saves the sales organization over 200 hours a month, which is a massive amount of reclaimed selling time. Managers can finally stop acting as “data hygiene police” and instead spend their coaching sessions discussing the actual state of the deal, supported by over 1,800 AI-generated account summaries and 700+ calls scored monthly for structured feedback.
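As a rough illustration of what a per-dimension extraction pass might look like, the Python sketch below builds one prompt per MEDDPICC dimension and keeps only quotes that actually appear in the transcript. The prompt wording, the JSON reply shape, and the `ask_model` callable are assumptions made for this example. A real deployment would substitute its own model client and CRM write-back, neither of which is shown here.

```python
import json
from typing import Callable

MEDDPICC = [
    "Metrics", "Economic Buyer", "Decision Criteria", "Decision Process",
    "Paper Process", "Identify Pain", "Champion", "Competition",
]


def build_prompt(dimension: str, transcript: str) -> str:
    """Task-specific prompt for one dimension (wording is an assumption)."""
    return (
        "From the sales call transcript below, quote the prospect's exact "
        f"words that give evidence for the MEDDPICC dimension '{dimension}'. "
        'Reply as JSON: {"found": true or false, "evidence": ["..."]}.\n\n'
        f"{transcript}"
    )


def extract_meddpicc(transcript: str, ask_model: Callable[[str], str]) -> dict:
    """Return {dimension: {"found": bool, "evidence": [quotes]}}."""
    record = {}
    for dim in MEDDPICC:
        raw = ask_model(build_prompt(dim, transcript))
        try:
            parsed = json.loads(raw)
        except json.JSONDecodeError:
            parsed = {}
        if not isinstance(parsed, dict):
            parsed = {}
        # Keep only quotes that literally occur in the transcript, so the
        # record never stores evidence the model invented.
        evidence = [
            q for q in parsed.get("evidence", [])
            if isinstance(q, str) and q in transcript
        ]
        record[dim] = {"found": bool(evidence), "evidence": evidence}
    return record


# Hypothetical stand-in for a model call, used only to make the sketch runnable.
def fake_model(prompt: str) -> str:
    if "'Competition'" in prompt:
        return json.dumps(
            {"found": True, "evidence": ["we are also evaluating another vendor"]}
        )
    return json.dumps({"found": False, "evidence": []})


transcript = "Prospect: Honestly, we are also evaluating another vendor this quarter."
record = extract_meddpicc(transcript, fake_model)
print(record["Competition"])
```

The resulting dictionary is the kind of structured payload that could be written back to CRM fields, with the quoted evidence giving managers something concrete to coach against.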

Many inbound leads arrive with missing job titles, causing them to be improperly scored or ignored. How do you implement a five-minute enrichment window to prevent these routing errors, and how does matching leads to existing accounts change the way a representative prepares for an initial conversation?

It is a silent revenue killer when 27% of your inbound leads arrive without a job title, causing them to score as a zero and drop into a low-quality nurture track. We solve this by enforcing a strict five-minute enrichment window where, the moment a form is submitted, the system validates the lead’s professional identity before any notification fires to a rep. During this same window, we match roughly 15% of inbound leads to existing accounts already in the system, ensuring they go to the right account owner rather than a general queue. This allows a representative to step into that first conversation with deep context on the account’s history and the lead’s specific role. It replaces that awkward, generic discovery phase with a high-value, informed consultation that builds immediate trust with the buyer.
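The gating logic can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: leads are matched to accounts by email domain, a missing job title is the only enrichment gap, and the `Lead` class, `route_lead` function, and owner names are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

ENRICHMENT_WINDOW = timedelta(minutes=5)


@dataclass
class Lead:
    email: str
    submitted_at: datetime
    job_title: Optional[str] = None


def route_lead(lead: Lead, now: datetime, account_owners: dict[str, str]) -> dict:
    """Hold the rep notification until the lead is enriched or the window ends."""
    domain = lead.email.rsplit("@", 1)[-1].lower()
    # Leads from a known account go to its owner, everything else to a queue.
    owner = account_owners.get(domain, "general_queue")
    window_open = now - lead.submitted_at < ENRICHMENT_WINDOW
    if lead.job_title is None and window_open:
        return {"action": "hold_for_enrichment", "owner": owner}
    return {"action": "notify", "owner": owner, "title": lead.job_title or "unknown"}


t0 = datetime(2024, 1, 1, 9, 0)
owners = {"acme.com": "rep_jordan"}  # assumed account-owner mapping

lead = Lead("pat@acme.com", t0)
print(route_lead(lead, t0 + timedelta(minutes=1), owners))
# {'action': 'hold_for_enrichment', 'owner': 'rep_jordan'}

lead.job_title = "VP of Operations"  # enrichment filled the gap
print(route_lead(lead, t0 + timedelta(minutes=2), owners))
# {'action': 'notify', 'owner': 'rep_jordan', 'title': 'VP of Operations'}
```

In this sketch a lead that is still missing a title when the window closes is released anyway, so no lead is held indefinitely. Whether to notify or fall back to nurture at that point is a policy choice the source does not specify.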

Webinar follow-up often stalls for days while teams manually scrub attendee lists. What is the framework for automatically tiering these leads into hot, warm, and cold categories, and how does an automated alert system help increase lead coverage from half to nearly all attendees?

The “webinar hangover” usually lasts two to three days while marketing ops manually scrubs lists, which is far too long in a world where speed-to-lead is everything. We’ve built a framework that automatically scores every attendee into hot, warm, and cold tiers the moment the event ends, triggering immediate sequences for the cold leads and high-priority alerts for the rest. By removing the manual export and scrubbing process, we’ve seen lead coverage jump from a measly 50% to over 90%, so almost no one who expressed interest goes without follow-up. The automated alerts provide reps with the specific context of the attendee’s engagement, turning a cold follow-up into a warm, relevant extension of the webinar topic. It creates a seamless experience for the prospect and removes any ambiguity for the sales team regarding who is responsible for the next move.
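A tiering rule of this kind might look like the Python sketch below. The engagement signals, point weights, and thresholds are assumptions chosen for illustration, since the interview does not specify the actual scoring model. Only the three tiers and the split between sequences for cold leads and alerts for the rest come from the description above.

```python
from dataclasses import dataclass


@dataclass
class Attendee:
    email: str
    minutes_attended: int
    questions_asked: int
    is_target_account: bool


def score(a: Attendee) -> int:
    """Assumed weights: attendance time, questions, and account fit."""
    points = min(a.minutes_attended, 60)  # cap attendance credit at 60
    points += 15 * a.questions_asked
    points += 25 if a.is_target_account else 0
    return points


def tier(a: Attendee) -> str:
    s = score(a)
    if s >= 80:
        return "hot"
    if s >= 40:
        return "warm"
    return "cold"


def next_action(a: Attendee) -> str:
    """Cold leads enter a sequence; hot and warm leads trigger a rep alert."""
    t = tier(a)
    return "enroll_in_sequence" if t == "cold" else f"alert_rep:{t}"


attendees = [
    Attendee("a@example.com", 55, 1, True),    # 55 + 15 + 25 = 95
    Attendee("b@example.com", 45, 0, False),   # 45
    Attendee("c@example.com", 10, 0, False),   # 10
]
actions = {a.email: next_action(a) for a in attendees}
print(actions)
```

Because every attendee receives exactly one action, none falls through for lack of a manual export, which is the coverage gain described above.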

Building a unified data layer requires alignment between sales, marketing, and operations leadership. How should a GTM engineer structure a cross-functional working group to prioritize new workflows, and what are the long-term benefits of treating data as a compounding system rather than a collection of tools?

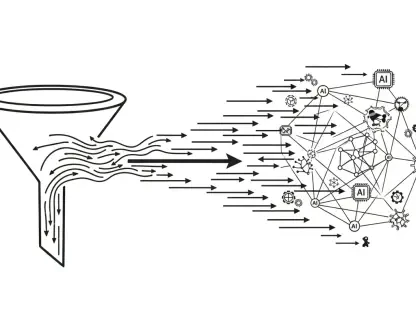

To make this successful, you have to move away from a reactive “ticket-taking” mentality and toward a biweekly working group that includes the CMO, VP of Sales, and VP of RevOps. This group focuses on the high-level strategy of the GTM engine, shifting from triaging a backlog of errors to planning proactive workflows like living intent scoring. When you treat your data as a compounding system, you stop buying five different tools to solve five different problems and start building one infrastructure that answers every question. The long-term benefit is a sense of organizational relief; marketing gets cleaner attribution, sales gets better-prioritized accounts, and leadership finally has a data layer they can trust for forecasting. It turns your tech stack into a flywheel where every new workflow makes the entire system smarter and more efficient.

What is your forecast for the role of the GTM Engineer in the next five years?

In the next five years, the GTM Engineer will transition from being a specialized “technical builder” in the background to becoming the central architect of a company’s growth strategy. We will see the role move away from simply connecting APIs and toward designing autonomous “revenue nervous systems” that can sense market shifts and reallocate sales resources in real time. I expect GTM Engineers to lead the charge in moving companies away from static, quarterly territory planning and toward a fluid, weekly ranking of accounts based on real-time intent signals. The most successful organizations won’t just have the best product; they will have the most sophisticated GTM infrastructure that allows them to find and engage the right buyers before their competitors even know those buyers are in-market.